Big Brother’s Playbook

DHS efforts to curb "dangerous" speech

The federal government’s guidelines to counter “misinformation” and “disinformation” (what we might more accurately describe as Big Brother’s Playbook) have been released. The US government and its allies, including media gatekeepers, educational institutions, and global corporations, have become the judges and juries of “misinformation.”

And Big Tech is their executioner.

According to recently filed court documents in Missouri v. Biden that were made available by The Intercept (big hat tip to Lee Fang and Ken Klippenstein), “The Department of Homeland Security is quietly broadening its efforts to curb speech it considers dangerous.”

We’ve highlighted the key points of these court documents with screenshots below. Read it all; it’s especially relevant to the 2022 and 2024 elections.

DHS is “directly engaging with social media companies to flag MDM” (MDM is defined as mis-information, dis-information, and mal-information). It is doing so “Ahead of midterm elections in 2022” and is readying its efforts with eyes focused on 2024:

DHS is questioning how to “inspire innovators to partner with the government” without this “being seen as government ‘propaganda’”.

The “rapid response team would need to surge for short periods of time around elections.”

It recognizes the system’s vulnerabilities to what it considers disinformation: “The spread of false and misleading information poses a significant risk to critical functions like elections, public health, financial services, and emergency response.”

The DHS focus on disinformation isn’t limited to elections. It recommends focusing on misinformation “that undermines critical functions carried out by other key democratic institutions, such as the courts, or by other sectors such as the financial system, or public health measures.”

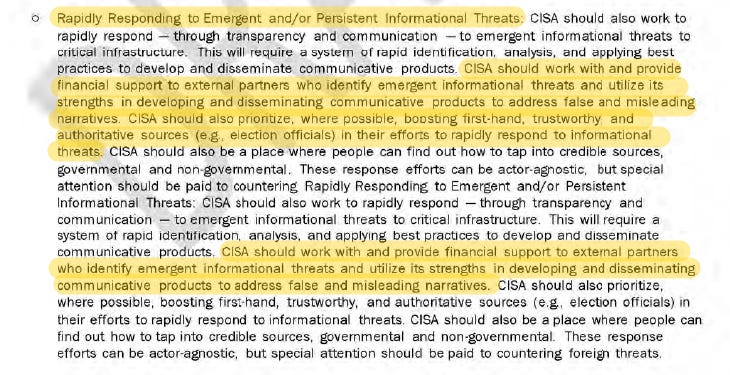

DHS has recognized four specific dimensions of disinformation to counter. It recommends: (1) building a society resilient to misinformation/disinformation; (2) proactively addressing anticipated misinformation/disinformation threats; (3) rapidly responding to emergency or persistent informational threats; and (4) countering actor-based threats.

The DHS plan would “provide financial support” to non-governmental partners who counter “false and misleading narratives.” In other words, these would be US government contractors receiving funding to suffocate anti-government narratives.

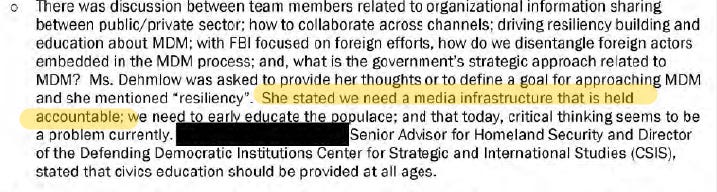

There was discussion that “we need a media infrastructure that is held accountable.”

Like I said, their focus is on you. One participant defined the organization’s goal as “to reduce Americans’ engagement with MDM” (mis-information, dis-information, and mal-information).

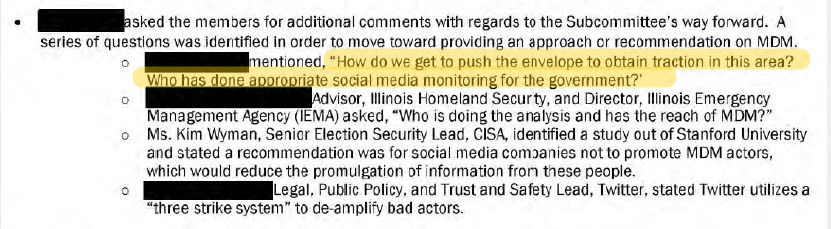

One member demanded social media monitoring by the government: “How do we get to push the envelope to obtain traction in this area? Who has done appropriate social media monitoring for the government?”

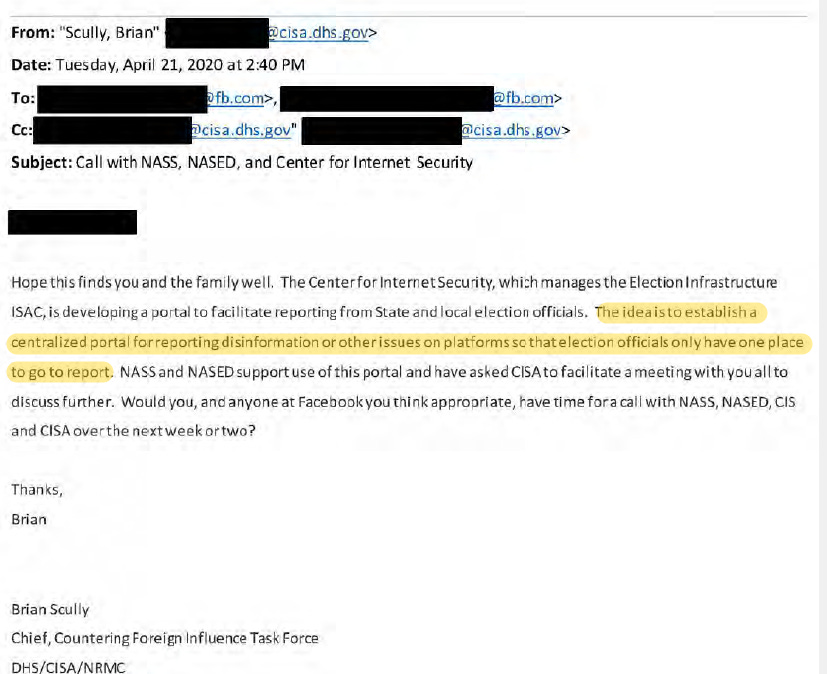

Then there are the e-mails. No surprise that Facebook and DHS were actively monitoring “election misinformation”. Here’s one such example:

There are also the logistical issues. There were discussions of local officials using a centralized DHS portal for “reporting disinformation or other issues on platforms”, after which the “disinformation” would theoretically be reported to any applicable social media/tech companies. In effect, that means the real-time elimination of election-related questions.

Then there was the disclosure of e-mails between the tech platforms and the Biden Administration discussing the flagging or removal of content that questioned COVID-19 vaccines.

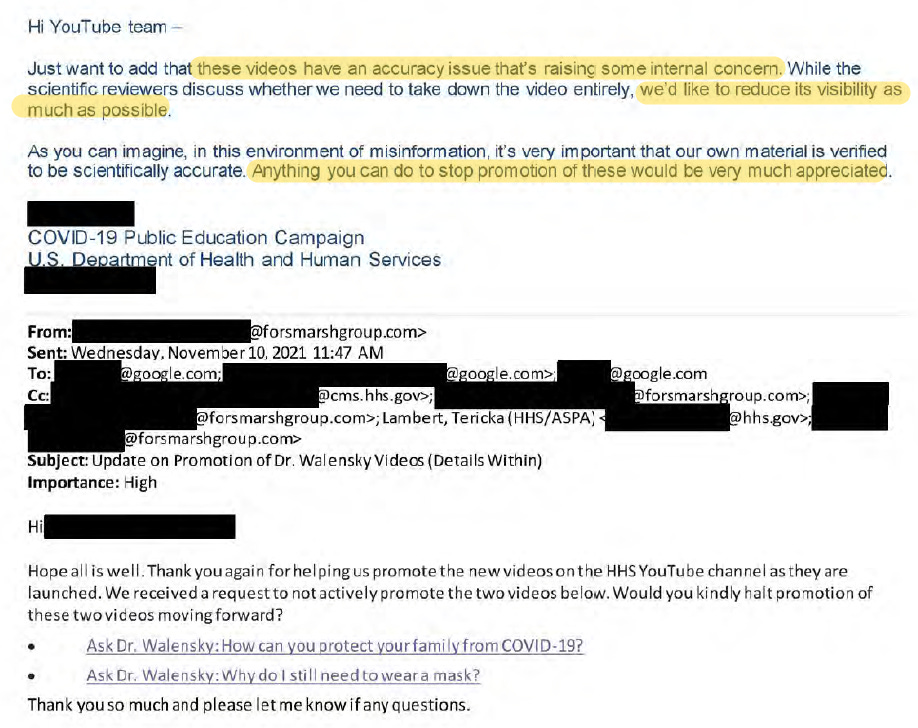

There were also e-mails from HHS to marketing contractors and Google seeking to hide videos of CDC Director Rochelle Walensky because they had an “accuracy issue.”

I hope the dangers of these programs and initiatives are apparent. It would be the federal government and its partners who would define misinformation. These aren’t neutral observers; rather, their power relies on a narrative. Perceived illegitimacy is a risk to them.

In other words, it’s the belief that’s the threat, not the “misinformation” by itself. Thus, the real targets are those who listen and read and watch. Beliefs are shaped by limiting what they can see. Better to keep those dangerous ideas out of public view.

My guess is the depositions about to happen in Missouri v. Biden have them panicked. That's the reason for The Atlantic's "can't we just let bygones be bygones" piece and the DHS document leak to The Intercept. This is their M.O. when really bad information is about to drop. They get ahead of it by leaking it to some leftie publication in order to shape the narrative.

No way The Intercept publishes this without the higher-ups in the intel community giving them the green light. This is the same publication that Glenn Greenwald was forced to leave because they quashed his reporting on the Hunter Biden laptop, a publication he co-founded. They're state-run media now. They're Pravda.

Which raises the question: dangerous to whom?